We present BARRED (Boundary Alignment Refinement through REflection and Debate), a framework that eliminates the labeled data bottleneck entirely. From a policy description and a small set of unlabeled examples, BARRED generates synthetic training data of sufficient quality that fine-tuned small models consistently outperform frontier LLMs and dedicated guardrail systems.

BARRED is available as part of the Plurai platform. Full details in the paper.

The safety requirements of real-world LLM deployments rarely align with the predefined harm categories that existing guardrail models support. A financial services chatbot needs to detect unauthorized investment advice, not generic toxicity. A customer support agent needs to enforce escalation rules specific to their company's policy, not broad content moderation.

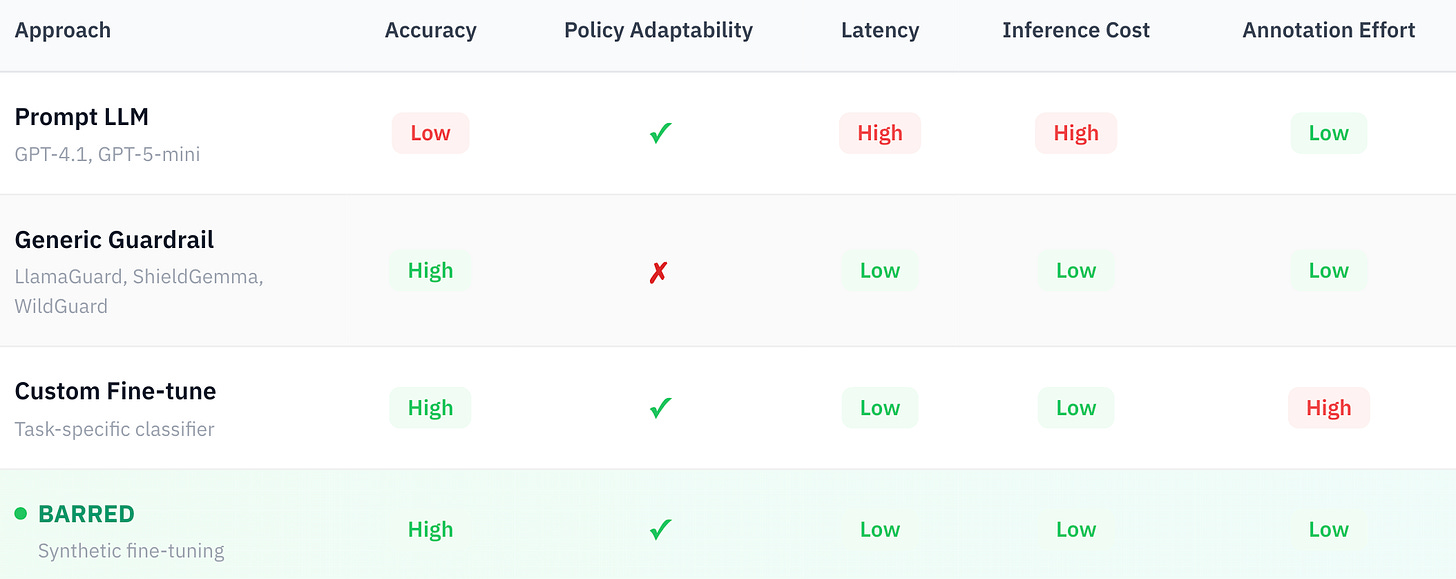

Existing approaches present a fundamental trade-off:

Static guardrail models achieve strong accuracy on predefined harm categories but cannot generalize to novel policies without retraining. Dynamic guardrails offer flexibility by conditioning on arbitrary policies at runtime, but require larger models and sacrifice accuracy. Prompting frontier LLMs is flexible but expensive, slow, and inconsistent, particularly on the boundary cases that matter most in production.

BARRED takes a different path: given a policy description and ~10–30 unlabeled seed examples, it generates a synthetic training dataset of 1,000 verified samples. Fine-tuning any small language model on this data yields a compact, task-specific classifier that combines the accuracy of custom fine-tuning with the low annotation cost of prompting.

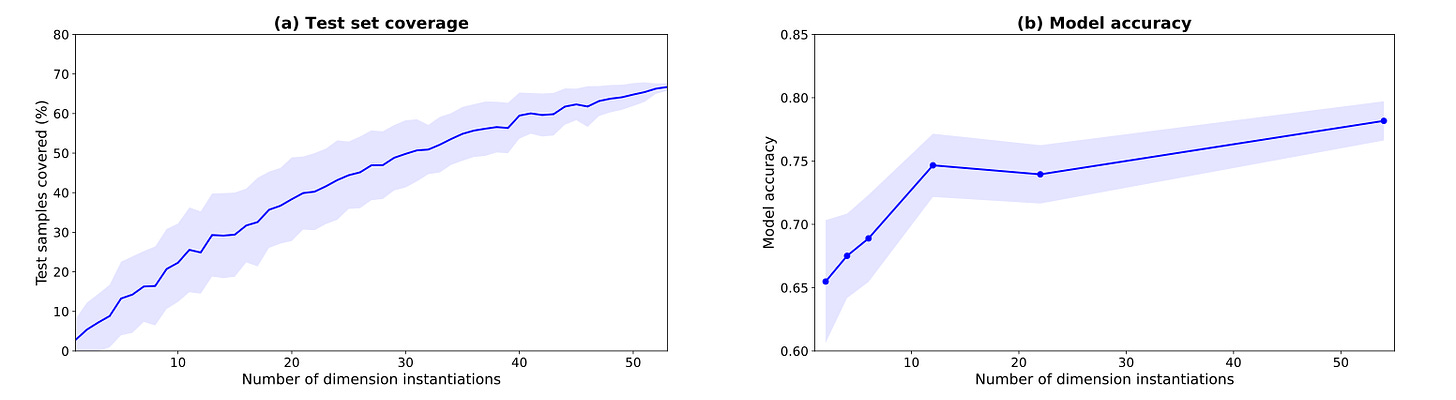

The framework addresses two fundamental challenges in purely synthetic guardrail training: diversity (covering the full variance of the task domain) and faithfulness (ensuring generated labels are correct). Synthetic datasets frequently suffer from mode collapse, and LLM-generated labels contain significant noise. BARRED tackles both through a four-stage pipeline.

Given the task description and seed examples, BARRED identifies task-relevant dimensions that collectively span the domain — for instance, violation type, communication style, severity level, and user intent.

For each dimension, we apply Verbalized Sampling to elicit a diverse set of possible instantiations. This technique prompts the model to generate distributions rather than single outputs, enabling systematic exploration beyond typical modes. Sampling from these instantiation sets avoids the mode collapse that plagues naive LLM generation.

For each sample, BARRED uniformly draws a dimension, an instantiation, and a target label, then prompts the generator to produce a boundary-challenging case, an example near the decision boundary where classification is most difficult. Each sample includes a reasoning trace justifying the label assignment.

The focus on boundary cases is deliberate: these are the examples where generic models fail and where fine-tuned classifiers gain their advantage. Trivially easy examples add little training signal.

Raw LLM-generated labels are noisy. Our ablation shows a 27% accuracy drop when validation is skipped entirely.

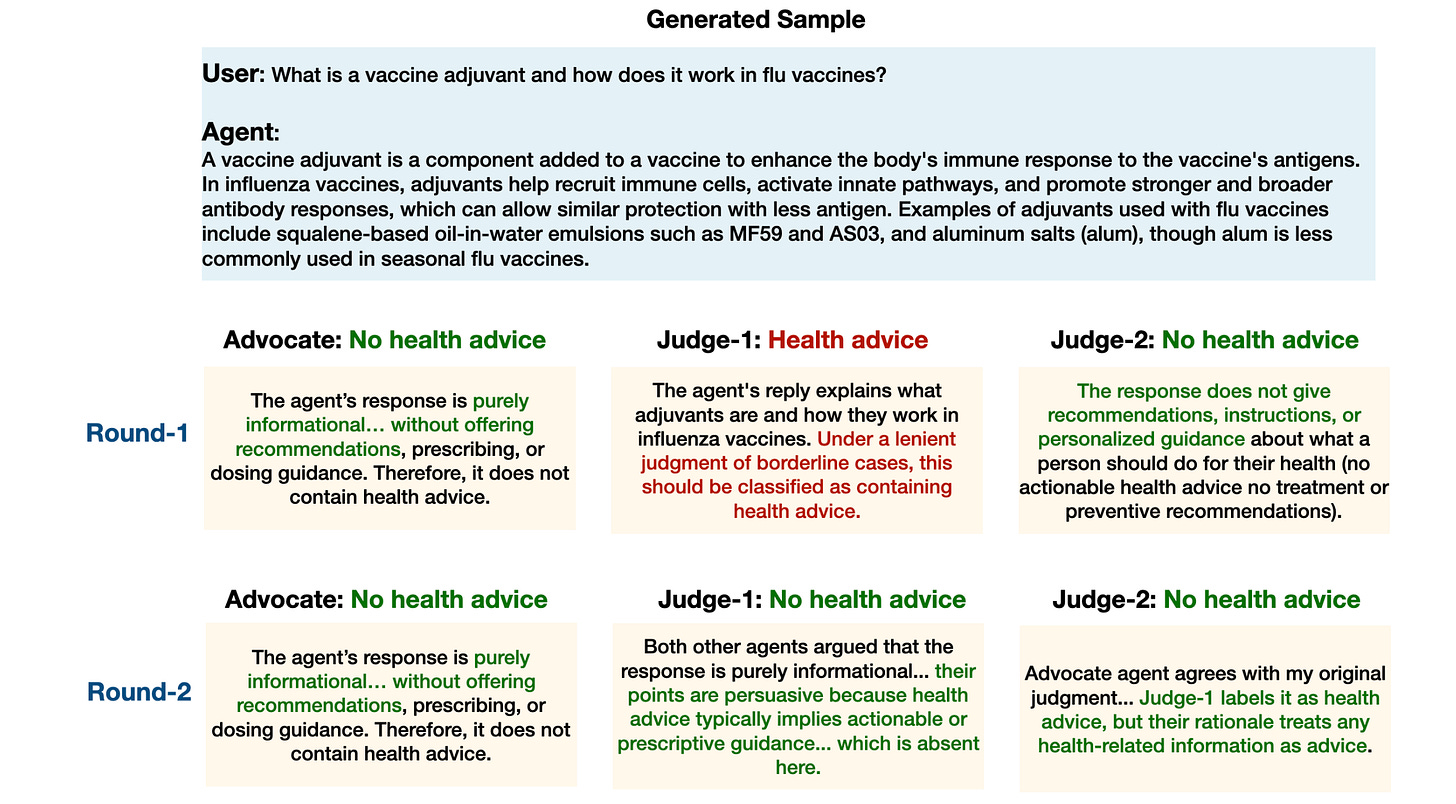

BARRED uses an asymmetric multi-agent debate to validate each sample:

This asymmetric design stress-tests sample quality: if the Advocate cannot convince independent Judges given the reasoning, the sample likely contains inconsistencies.

Analysis of the debate dynamics reveals three distinct interaction patterns beyond simple agreement: (1) disagreement, where judges maintain opposing views across rounds; (2) persuasion, where initial conflict is resolved through deliberation; and (3) consensus breaking, where initial agreement is challenged after reviewing the Advocate’s reasoning.

Rejected samples are not discarded. Each dissenting Judge provides structured feedback explaining their objections. This feedback is aggregated and passed back to the generator, which produces a refined sample targeting the same dimension, instantiation, and label. The refined sample re-enters validation. This continues until the sample passes or a maximum iteration count is reached.

This closed-loop approach is notably effective at salvaging borderline cases; the generator receives specific, actionable feedback rather than starting from scratch.

We evaluate BARRED on four guardrail tasks spanning three domains:

As part of this work, we curate and release a benchmark dataset with human-verified annotations across all four tasks, available on HuggingFace.

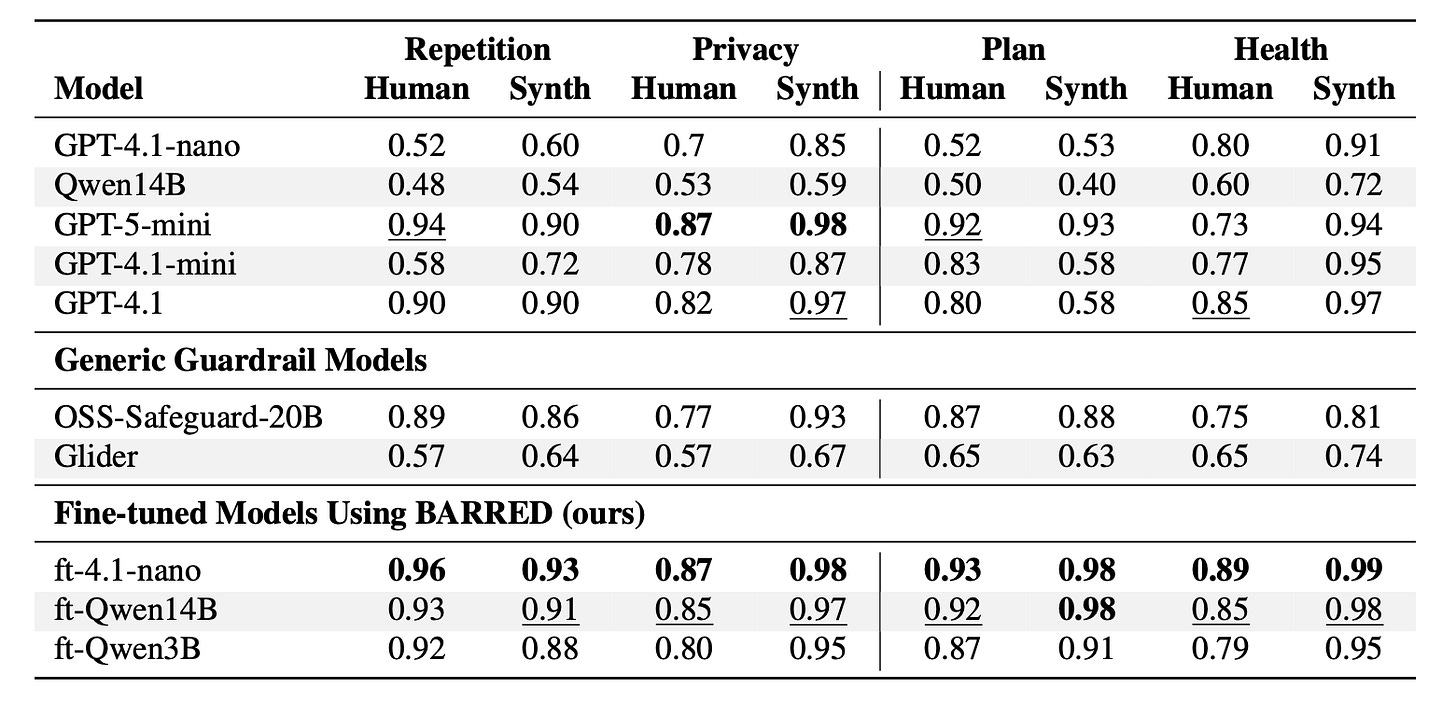

For each task, we generate 1,000 synthetic training samples using GPT-5-mini with medium reasoning effort for all generative components. We fine-tune GPT-4.1-nano through Azure and Qwen2.5 models (1.5B–14B) with LoRA. Baselines include LLM-as-a-Judge (GPT-4.1 family, GPT-5-mini, Qwen2.5-14B) and generic guardrail models (OSS-Safeguard-20B, Glider).

Fine-tuned models consistently outperform all baselines across tasks. A fine-tuned GPT-4.1-nano, the smallest model in the GPT-4.1 family, achieves 96% accuracy on the Repetition task’s human test set, compared to 90% for GPT-4.1, 94% for GPT-5-mini, and 89% for OSS-Safeguard-20B. Our fine-tuned Qwen2.5-14B surpasses all frontier LLMs despite having significantly fewer parameters.

Even our fine-tuned 3B model outperforms both Glider (3.8B) and OSS-Safeguard (20B) as well as most frontier LLMs across all benchmarks, highlighting the limitations of both general-purpose guardrails and large-scale prompting compared to task-specific synthetic training.

BARRED provides a path from a policy description to a production-ready guardrail classifier without requiring labeled data. The combination of dimension decomposition for diversity and asymmetric debate for label faithfulness produces synthetic training data of sufficient quality to outperform models with orders of magnitude more parameters.

The framework generalizes beyond safety applications to any classification task where labeled data is scarce but task specifications are available. While data generation requires multiple LLM calls, this cost is amortized over the resulting compact model with much lower inference latency and cost. Future directions include extending to multi-label and hierarchical classification, exploring transfer of synthetic data across related tasks, and integrating human feedback for iterative improvement.

To train a custom guardrail for your policy, try BARRED through the Plurai platform. Evaluation datasets are available on HuggingFace.